A data scientist has a Spark DataFrame spark_df. They want to create a new Spark DataFrame that contains only the rows from spark_df where the value in column discount is less than or equal 0.

Which of the following code blocks will accomplish this task?

Correct Answer:

C

To filter rows in a Spark DataFrame based on a condition, thefiltermethod is used. In this case, the condition is that the value in the "discount" column should be less than or equal to 0. The correct syntax uses thefiltermethod along with thecolfunction from pyspark.sql.functions.

Correct code:

frompyspark.sql.functionsimportcol filtered_df = spark_df.filter(col("discount") <=0)

Option A and D use Pandas syntax, which is not applicable in PySpark. Option B is closer but misses the use of thecolfunction.

References:

✑ PySpark SQL Documentation

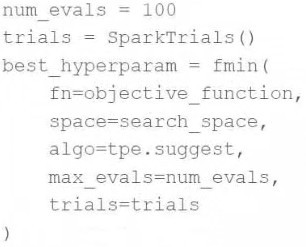

A data scientist wants to tune a set of hyperparameters for a machine learning model. They have wrapped a Spark ML model in the objective functionobjective_functionand they have defined the search spacesearch_space.

As a result, they have the following code block:

Which of the following changes do they need to make to the above code block in order to accomplish the task?

Correct Answer:

A

TheSparkTrials()is used to distribute trials of hyperparameter tuning across a Spark cluster. If the environment does not support Spark or if the user prefers not to usedistributed computing for this purpose, switching toTrials()would be appropriate.Trials() is the standard class for managing search trials in Hyperopt but does not distribute the computation. If the user is encountering issues withSparkTrials()possibly due to an unsupported configuration or an error in the cluster setup, usingTrials()can be a suitable change for running the optimization locally or in a non-distributed manner.

References

✑ Hyperopt documentation: http://hyperopt.github.io/hyperopt/

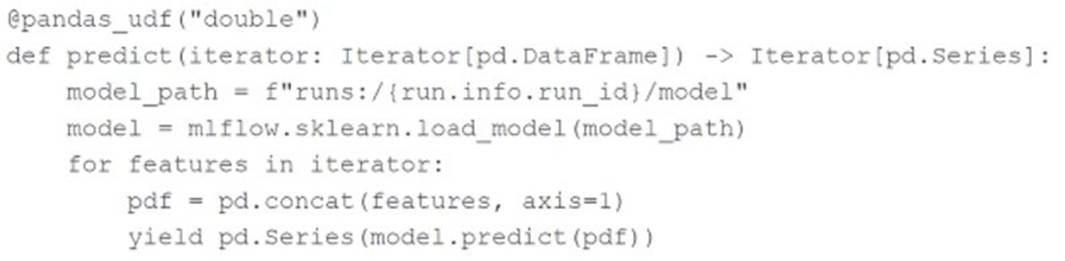

A machine learning engineer is using the following code block to scale the inference of a single-node model on a Spark DataFrame with one million records:

Assuming the default Spark configuration is in place, which of the following is a benefit of using anIterator?

Correct Answer:

C

Using an iterator in thepandas_udfensures that the model only needs to be loaded once per executor rather than once per batch. This approach reduces the overhead associated with repeatedly loading the model during the inference process, leading to more efficient and faster predictions. The data will be distributed across multiple executors, but each executor will load the model only once, optimizing the inference process. References:

✑ Databricks documentation on pandas UDFs: Pandas UDFs

A machine learning engineer has grown tired of needing to install the MLflow Python library on each of their clusters. They ask a senior machine learning engineer how their notebooks can load the MLflow library without installing it each time. The senior machine learning engineer suggests that they use Databricks Runtime for Machine Learning.

Which of the following approaches describes how the machine learning engineer can begin using Databricks Runtime for Machine Learning?

Correct Answer:

C

The Databricks Runtime for Machine Learning includes pre-installed packages and libraries essential for machine learning and deep learning, including MLflow. To use it, the machine learning engineer can simply select an appropriate Databricks Runtime ML version from the "Databricks Runtime Version" dropdown menu while creating their cluster. This selection ensures that all necessary machine learning libraries, including MLflow, are pre-installed and ready for use, avoiding the need to manually install them each time.

References

✑ Databricks documentation on creating clusters: https://docs.databricks.com/clusters/create.html

Which of the Spark operations can be used to randomly split a Spark DataFrame into a training DataFrame and a test DataFrame for downstream use?

Correct Answer:

E

The correct method to randomly split a Spark DataFrame into training and test sets is by using therandomSplitmethod. This method allows you to specify the proportions for the split as a list of weights and returns multiple DataFrames according to those weights. This is directly intended for splitting DataFrames randomly and is the appropriate choice for preparing data for training and testing in machine learning workflows.References:

✑ Apache Spark DataFrame API documentation (DataFrame Operations: randomSplit).