The implementation of linear regression in Spark ML first attempts to solve the linear regression problem using matrix decomposition, but this method does not scale well to large datasets with a large number of variables.

Which of the following approaches does Spark ML use to distribute the training of a linear regression model for large data?

Correct Answer:

C

For large datasets, Spark ML uses iterative optimization methods to distribute the training of a linear regression model. Specifically, Spark MLlib employs techniques like Stochastic Gradient Descent (SGD) and Limited-memory Broyden–Fletcher–Goldfarb–Shanno (L-BFGS) optimization to iteratively update the model parameters. These methods are well-suited for distributed computing environments because they can handle large-scale data efficiently by processing mini-batches of data and updating the model incrementally.

References:

✑ Databricks documentation on linear regression: Linear Regression in Spark ML

A data scientist wants to efficiently tune the hyperparameters of a scikit-learn model in parallel. They elect to use the Hyperopt library to facilitate this process.

Which of the following Hyperopt tools provides the ability to optimize hyperparameters in parallel?

Correct Answer:

B

TheSparkTrialsclass in the Hyperopt library allows for parallel hyperparameter optimization on a Spark cluster. This enables efficient tuning of hyperparameters by distributing the optimization process across multiple nodes in a cluster. fromhyperoptimportfmin, tpe, hp, SparkTrials search_space = {'x': hp.uniform('x',0,1),'y': hp.uniform('y',0,1) }defobjective(params):returnparams['x'] **2+ params['y'] **2spark_trials = SparkTrials(parallelism=4) best = fmin(fn=objective, space=search_space, algo=tpe.suggest, max_evals=100, trials=spark_trials)

References:

✑ Hyperopt Documentation

A data scientist has developed a linear regression model using Spark ML and computed the predictions in a Spark DataFrame preds_df with the following schema:

prediction DOUBLE actual DOUBLE

Which of the following code blocks can be used to compute the root mean-squared-error of the model according to the data in preds_df and assign it to the rmse variable?

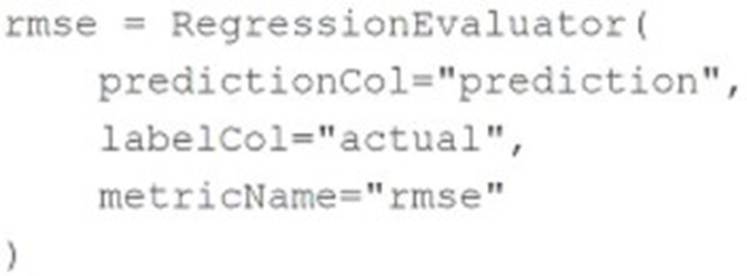

A)

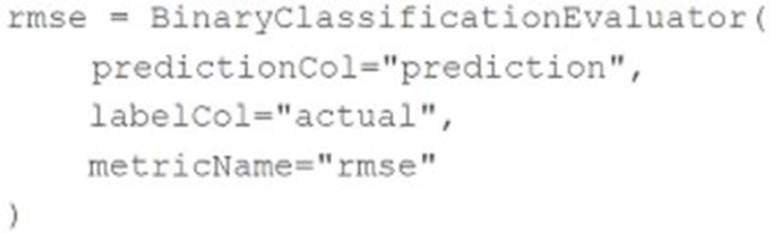

B)

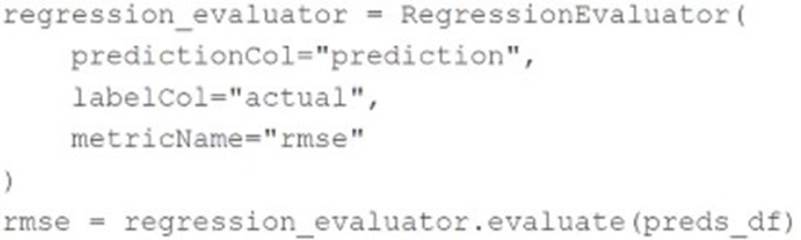

C)

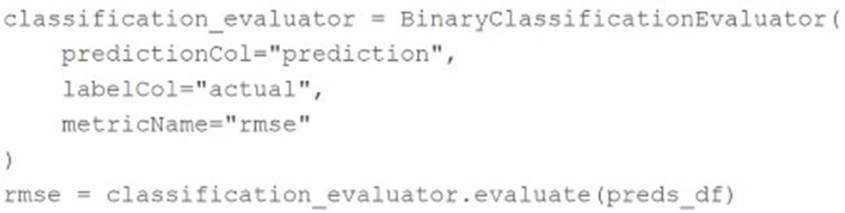

D)

Correct Answer:

C

To compute the root mean-squared-error (RMSE) of a linear regression model using Spark ML, theRegressionEvaluatorclass is used. TheRegressionEvaluator is specifically designed for regression tasks and can calculate various metrics, including RMSE, based on the columns containing predictions and actual values.

The correct code block to compute RMSE from thepreds_dfDataFrame is: regression_evaluator = RegressionEvaluator( predictionCol="prediction", labelCol="actual", metricName="rmse") rmse = regression_evaluator.evaluate(preds_df)

This code creates an instance ofRegressionEvaluator, specifying the prediction and label columns, as well as the metric to be computed ("rmse"). It then evaluates the predictions in preds_dfand assigns the resulting RMSE value to thermsevariable.

Options A and B incorrectly useBinaryClassificationEvaluator, which is not suitable for regression tasks. Option D also incorrectly usesBinaryClassificationEvaluator. References:

✑ PySpark ML Documentation

A health organization is developing a classification model to determine whether or not a patient currently has a specific type of infection. The organization's leaders want to maximize the number of positive cases identified by the model.

Which of the following classification metrics should be used to evaluate the model?

Correct Answer:

E

When the goal is to maximize the identification of positive cases in a classification task, the metric of interest isRecall. Recall, also known as sensitivity, measures the proportion of actual positives that are correctly identified by the model (i.e., the true positive rate). It is crucial for scenarios where missing a positive case (false negative) has serious implications, such as in medical diagnostics. The other metrics like Precision, RMSE, and Accuracy serve different aspects of performance measurement and are not specifically focused on maximizing the detection of positive cases alone. References:

✑ Classification Metrics in Machine Learning (Understanding Recall).

A data scientist is attempting to tune a logistic regression model logistic using scikit-learn. They want to specify a search space for two hyperparameters and let the tuning process randomly select values for each evaluation.

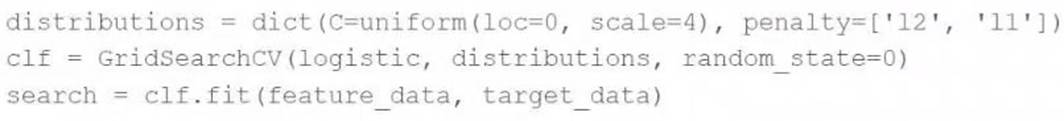

They attempt to run the following code block, but it does not accomplish the desired task:

Which of the following changes can the data scientist make to accomplish the task?

Correct Answer:

A

The user wants to specify a search space for hyperparameters and let the tuning process randomly select values.GridSearchCVsystematically tries every combination of the provided hyperparameter values, which can be computationally expensive and time-consuming.RandomizedSearchCV, on the other hand, samples hyperparameters from a distribution for a fixed number of iterations. This approach is usually faster and still can find very good parameters, especially when the search space is large or includes distributions.

References

✑ Scikit-Learn documentation on hyperparameter tuning: https://scikit- learn.org/stable/modules/grid_search.html#randomized-parameter-optimization